Abstract

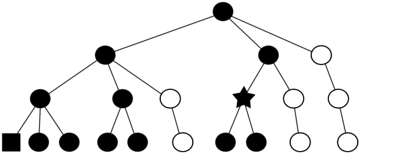

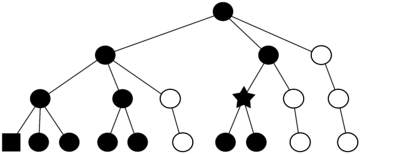

Branch-and-bound (B&B) feature selection finds optimal feature subsets without performing an exhaustive search. However, the classification accuracy achievable with optimal B&B feature subsets is often inferior to the accuracy achievable with other algorithms that guarantee optimality.
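To illustrate how B&B can avoid an exhaustive search, here is a minimal sketch of the classic scheme, assuming a criterion that is monotone (removing a feature never increases its value). The names `branch_and_bound`, `toy_criterion`, `exhaustive`, and `WEIGHTS` are hypothetical and chosen only for this example; this is not the ECST implementation.

```python
from itertools import combinations

def branch_and_bound(n_features, d, criterion):
    """Find the size-d subset maximizing a monotone criterion.

    Assumes criterion(S) >= criterion(T) whenever T is a subset of S.
    """
    best_value = float("-inf")
    best_subset = None

    def search(subset, start):
        nonlocal best_value, best_subset
        value = criterion(subset)
        # Monotonicity: nothing below this node can beat `value`,
        # so the whole branch is pruned if it cannot beat the best leaf.
        if value <= best_value:
            return
        if len(subset) == d:
            best_value, best_subset = value, subset
            return
        # Remove one feature at position i >= start; the upper limit d
        # leaves enough removable positions to reach size d, and the
        # ordering ensures every size-d subset is reachable exactly once.
        for i in range(start, d + 1):
            search(subset[:i] + subset[i + 1:], i)

    search(tuple(range(n_features)), 0)
    return best_subset, best_value

# Toy monotone criterion (an assumption for illustration only):
# non-negative feature weights plus a bonus when features 0 and 3 co-occur.
WEIGHTS = [0.9, 0.1, 0.5, 0.7, 0.3]

def toy_criterion(subset):
    bonus = 0.2 if 0 in subset and 3 in subset else 0.0
    return sum(WEIGHTS[i] for i in subset) + bonus

def exhaustive(n_features, d, criterion):
    """Reference: evaluate every size-d subset."""
    return max(combinations(range(n_features), d), key=criterion)

subset, value = branch_and_bound(5, 2, toy_criterion)
print(subset, value)
```

Because pruning relies only on monotonicity, the returned subset matches the exhaustive result whenever the criterion satisfies that assumption.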

We argue that this is due to the existing criterion functions, which define the optimal feature subset but may not capture inherent nonlinear data structures. We therefore propose B&B feature selection in Reproducing Kernel Hilbert Space (B&B-RKHS). The algorithm employs two existing criterion functions (Bhattacharyya distance, Kullback–Leibler divergence) and one new criterion function (mean class distance), all computed in RKHS. This enables B&B-RKHS to capture inherent nonlinear data structures.
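As a rough illustration of what computing a class distance in RKHS can look like, the sketch below evaluates the squared distance between two class means in feature space using only kernel evaluations (the kernel trick). It is a generic construction under stated assumptions, not necessarily the exact criterion defined in the paper; the function names, the RBF kernel choice, and the `gamma` parameter are hypothetical.

```python
import math

def rbf_kernel(x, y, gamma=1.0):
    """Gaussian RBF kernel k(x, y) = exp(-gamma * ||x - y||^2)."""
    return math.exp(-gamma * sum((a - b) ** 2 for a, b in zip(x, y)))

def linear_kernel(x, y):
    """Plain dot product; recovers the ordinary Euclidean case."""
    return sum(a * b for a, b in zip(x, y))

def mean_kernel(A, B, kernel):
    """Average kernel value over all pairs (a, b) with a in A, b in B."""
    return sum(kernel(a, b) for a in A for b in B) / (len(A) * len(B))

def squared_mean_distance(class1, class2, features, kernel=rbf_kernel):
    """Squared distance between the two class means in feature space.

    ||mu1 - mu2||^2 = mean k(A, A) + mean k(B, B) - 2 * mean k(A, B),
    evaluated on the selected feature columns only.
    """
    A = [[row[j] for j in features] for row in class1]
    B = [[row[j] for j in features] for row in class2]
    return (mean_kernel(A, A, kernel)
            + mean_kernel(B, B, kernel)
            - 2.0 * mean_kernel(A, B, kernel))

# Two small classes with two features each (made-up numbers).
class1 = [[0.0, 0.0], [2.0, 0.0]]
class2 = [[1.0, 3.0], [1.0, 5.0]]
print(squared_mean_distance(class1, class2, [0, 1]))
print(squared_mean_distance(class1, class2, [0, 1], kernel=linear_kernel))
```

With the linear kernel, the value reduces to the squared Euclidean distance between the class means in input space, which gives a simple sanity check for the kernelized formula.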

The algorithm was experimentally compared to the popular wrapper approach, which has to use an exhaustive search to guarantee optimality. The classification accuracy achieved with both methods was comparable. However, B&B-RKHS ran faster with the two existing criterion functions and far faster with the new criterion function (about 60 times faster on average). This paper therefore proposes an efficient algorithm for cases where feature subsets with guaranteed optimality have to be selected in data sets with inherent nonlinear structures.

Source Code

An implementation is available in the Embedded Classification Software Toolbox (ECST).

Publications

- Optimal feature selection for nonlinear data using branch-and-bound in kernel space

In: Pattern Recognition Letters 68 (2015), p. 56-62

ISSN: 0167-8655

DOI: 10.1016/j.patrec.2015.08.007