Project leader:

Project members:

Start date: 1 July 2017

End date: 30 June 2020

Funding source: Siemens AG

Abstract

Autonomous applied logistics, especially in the context of Industry 4.0, is an important building block for fully automated systems. Such systems cover the autonomous operation of loading and unloading processes, safety measures such as detecting persons in danger zones, and the general optimization of logistical processes.

Applying these systems in a harbor environment, where different systems from all over the world interact, further increases the complexity of the loading and unloading processes. The aim of this research project is to determine the feasibility of automating the unloading process by segmenting, classifying, and fitting laser range data using machine learning techniques.

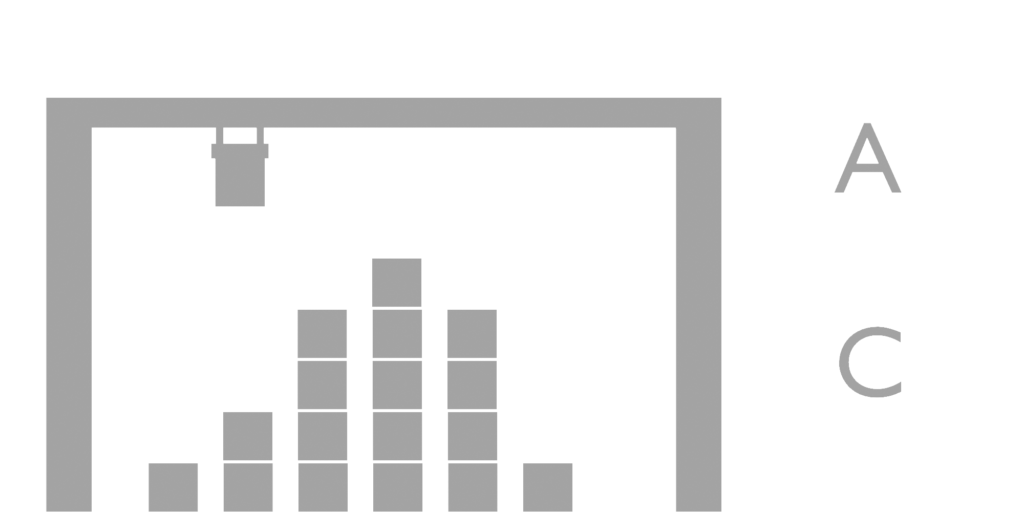

Twistlock recognition in laboratory conditions

Crane automation can only be achieved when the location, shape, and state of surrounding objects are known. These can be measured with lidar sensors, which have the advantage of precise location measurements. However, the biggest challenge with lidar sensors is their low resolution compared to RGB images, which makes it difficult to capture objects that are far away or small at all. This project explores the resolution required for reliable recognition of small-scale objects, e.g. twistlocks.
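To make the resolution problem concrete, the following minimal sketch estimates how many lidar beams fall across a small object at a given distance. It is a geometric approximation only (a flat surface facing the sensor), and the function names, the object width, and the angular resolution in the usage example are illustrative assumptions, not values from this project.

```python
import math


def beam_spacing_m(distance_m, angular_res_deg):
    """Approximate gap between neighboring beams on a surface facing the sensor."""
    return 2.0 * distance_m * math.tan(math.radians(angular_res_deg) / 2.0)


def points_across(object_size_m, distance_m, angular_res_deg):
    """Approximate number of beams that cross an object of the given width."""
    return object_size_m / beam_spacing_m(distance_m, angular_res_deg)


def max_range_for(object_size_m, angular_res_deg, min_points):
    """Largest distance at which min_points beams still cross the object."""
    required_spacing_m = object_size_m / min_points
    return required_spacing_m / (2.0 * math.tan(math.radians(angular_res_deg) / 2.0))


if __name__ == "__main__":
    # Assumed values for illustration: a 0.1 m wide object and 0.1 degree resolution.
    for distance in (5.0, 10.0, 20.0, 40.0):
        hits = points_across(0.1, distance, 0.1)
        print(f"{distance:5.1f} m -> about {hits:.1f} beams across the object")
```

The number of beams across the object falls off inversely with distance, so doubling the range halves the points available for recognition. This is why the required sensor resolution has to be determined per object size and working distance.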

Data collection and labeling in terminal environments

Machine learning and deep learning algorithms require a significant amount of data. Publicly available benchmark data for lidar sensors is sparse, and public datasets from terminal environments are virtually nonexistent. Additionally, labeling in 3D takes more time than labeling conventional RGB images because navigating a 3D visualization is less intuitive. This project collects data in terminal environments and addresses the challenges of labeling that data for machine learning applications.

Twistlock localization in terminal environments

Twistlock localization in terminal environments is inherently difficult because of the small scale of the object and the low resolution of the lidar sensor. However, the biggest problem is selecting the points that belong to the close vicinity of the object. No clear feature definitions exist, nor is the signal strong enough for direct detection. Therefore, a processing pipeline combining conventional and machine learning approaches is required to detect the object in a hierarchical manner.
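One way such a hierarchical pipeline could be organized is sketched below. This is not the project's actual implementation. It assumes that a larger, easier object (here a container) has already been segmented, that candidate locations lie at the bottom corners of its bounding box, and that a trained classifier is available as a callable. All function names and parameters are hypothetical.

```python
import math


def bounding_box_corners(points):
    """Stage 1 (conventional): bottom corners of the axis-aligned box around a coarse object."""
    xs, ys, zs = zip(*points)
    z_min = min(zs)
    return [(x, y, z_min) for x in (min(xs), max(xs)) for y in (min(ys), max(ys))]


def points_near(points, center, radius):
    """Stage 2 (conventional): keep only points in the close vicinity of a candidate location."""
    return [p for p in points if math.dist(p, center) <= radius]


def localize(scene, coarse_object, radius, classifier, min_points=3):
    """Stage 3 (learned): let a classifier decide on each candidate neighborhood.

    `classifier` is a placeholder for a trained model; it takes a list of
    (x, y, z) points and returns True if they look like the target object.
    """
    detections = []
    for corner in bounding_box_corners(coarse_object):
        candidate = points_near(scene, corner, radius)
        # Skip neighborhoods with too few points to carry any usable signal.
        if len(candidate) >= min_points and classifier(candidate):
            detections.append((corner, candidate))
    return detections
```

The coarse stages narrow the search to small neighborhoods, so the learned stage only has to judge a handful of points per candidate instead of the whole scan.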